We should regularly user test prototypes and wireframes to guarantee a great user experience. Here’s how to get started!

User testing prototypes and wireframes is vitally important. Why? For the exact same reason that we make prototypes and wireframes in the first place: to create amazing UX. An exceptional UX ensures our product does what we want it to do, which is to satisfy users’ needs. And so, time to bring out your favorite wireframe tool and get going!

But a great UX depends on the highest possible degree of usability, and you’re more likely to achieve that by regularly user testing prototypes and wireframes.

In this post, we’ll examine the benefits that you can get from regularly user testing prototypes, as well as take a look at what the typical user testing session looks like, along with some tips to get the most out of each session. Enjoy!

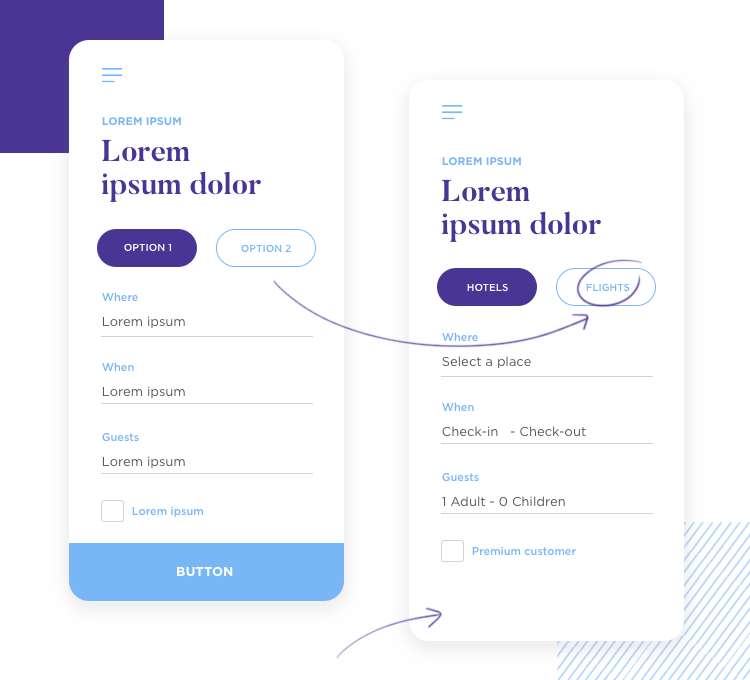

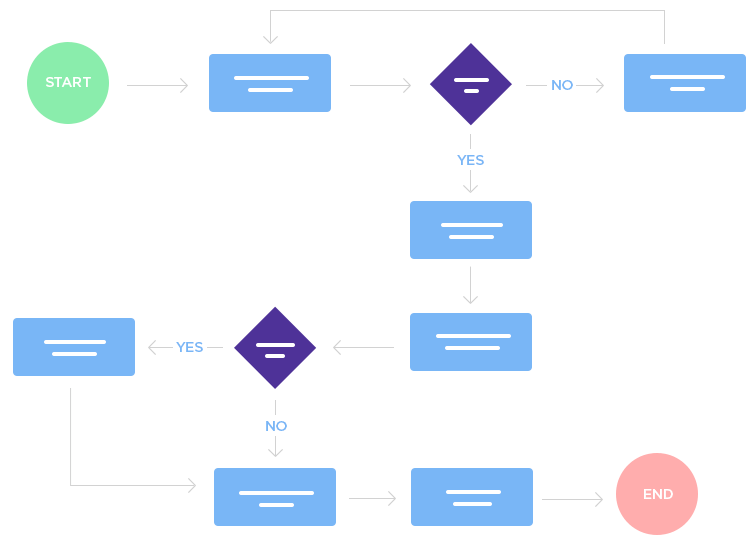

Fans of the “test often” agile methodology advocate regular testing because it breaks the entire project down into smaller, more digestible chunks for both you and your test users.

For example, user testing wireframes lets your users try out the most basic aspects of your product, such as navigation, information architecture and information flow. As fidelity increases and you reach the prototyping stage, you can test more with your users, such as interactions and color schemes.

Regularly user testing prototypes also keeps the whole design team on the same page as the user, because it helps the team keep users in mind every step of the way.

It’s therefore important that user testing is integrated into each and every iteration on your product. Regular testing should occur throughout the various stages of the development cycle, from the wireframe stage, right up to the prototype stage.

User testing prototypes and wireframes is a sure-fire way to build a great UX into your product from the start. It does this by letting you integrate UX requirements and scenarios early in the game and test them out. Here are just a few of the benefits you can expect to get:

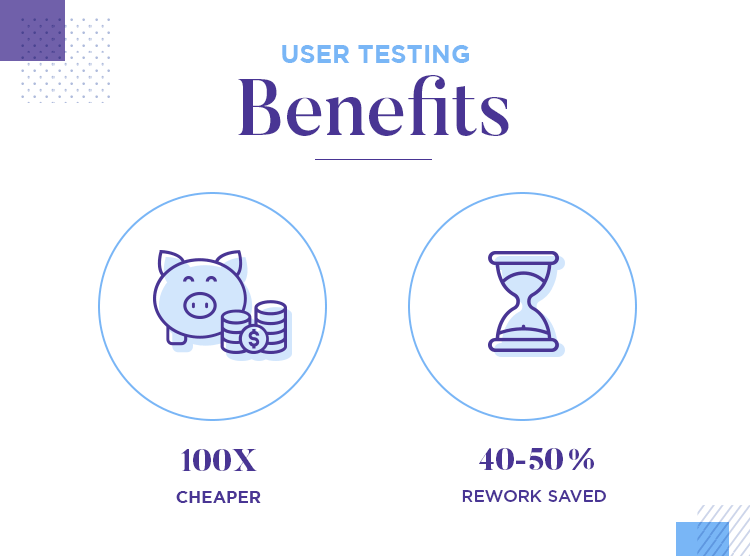

Fixing features at the end of the development stage, because they don’t work or users are rejecting them, is going to be costly. Development time costs significantly more than time spent changing a prototype, which makes changes at this point extremely expensive.

According to the Nielsen Norman Group, making changes to features at this advanced stage can be up to 100 times more expensive! Don’t wait until a feature is coded before fixing it – catch it early and solve it in a few clicks!

If that wasn’t enough to convince you, according to IEEE, you can save up to 40-50% of rework time in the development lifecycle by user testing prototypes in the early stages of development.

Did we mention that user testing prototypes and wireframes is also a great way of involving your stakeholders and keeping a smile on their faces?

User testing helps validate your design decisions and shows whether the assumptions you drew from your user personas hold up. It also reinforces the direction you’re taking with the product’s design and development.

Furthermore, by user testing wireframes and prototypes, you can gather a variety of insightful reports and metrics that provide solid evidence to back up design choices (we’ll go into more detail about metrics below). Facts resonate with stakeholders!

User testing prototypes and wireframes can also be a very strong pull for external investors. Why? Because it shows you’ve done the necessary market research and have an adequate roadmap backed by empirical evidence.

Once investors see that your product provides an actual solution to users, they may be more likely to invest in your solution.

Before you look at the mechanics of user testing prototypes, it’s important to be aware of the limitations. What do we mean by limitations? Depending on the fidelity of your prototype or wireframe, the results won’t be the same as they would be with the coded, live version.

Take copy as an example. If your copy is underdeveloped and you’re using placeholder text such as Lorem Ipsum (or a Lorem Ipsum alternative), decide on the minimum amount of real copy needed so that your users can understand and find their way around your prototype or wireframe.

In other words, make sure the copy on important menu and button options, as well as inline form validation, is something users understand and find intuitive. If you’re user testing a wireframe, also take into account that it lacks interactions and a color scheme.

With these considerations in mind, here are the main pillars of a usability test:

- Sample users

- Instructions

- Moderator

- Observers

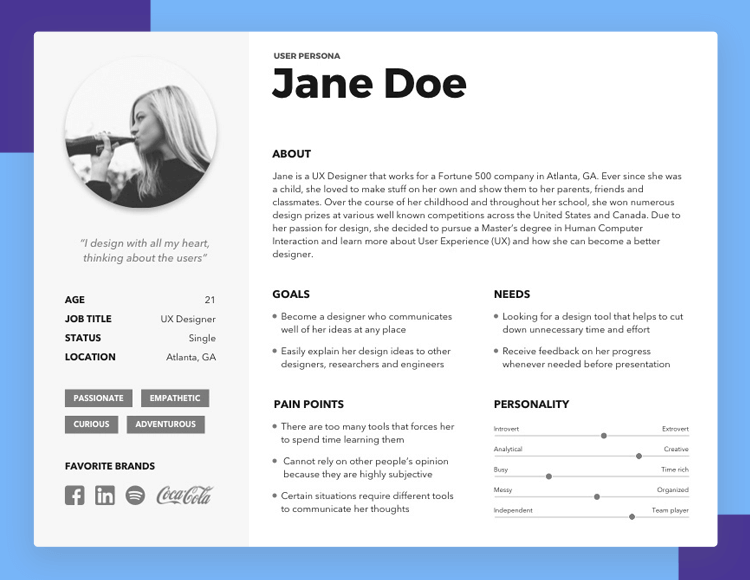

When we talk about sample users, we’re referring to the target group of users or demographic that you’ll be user testing wireframes and prototypes on. A very effective way to establish your prime userbase is to recruit users based on user personas. Another way of doing this is to use a user testing tool that automatically recruits sample users based on the kind of product you’re developing.

User Persona Black & White by Geunbae "GB" Lee

When it comes to how many users you should test with, Jakob Nielsen believes 5 is the optimum number. According to Nielsen, testing with more users than that risks repeating the same findings and wasting time. The premise is that zero users give zero insights, whereas a single user already reveals almost a third of the usability problems, and each additional user uncovers fewer new ones.
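Nielsen’s argument comes from a model he published with Tom Landauer, in which the share of usability problems found with n users is 1 − (1 − L)^n, where L is the proportion of problems a single user reveals. The Python sketch below simply evaluates that curve. The default L = 0.31 is the average Nielsen reports across the projects they studied; it is an assumption, not a property of your product, so adjust it if your own data suggests otherwise.

```python
def share_of_problems_found(n_users: int, l: float = 0.31) -> float:
    """Expected share of usability problems uncovered by n_users test users.

    Nielsen-Landauer model: 1 - (1 - L)^n. The default L = 0.31 is an
    assumed average, not a constant for every product.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= l <= 1.0:
        raise ValueError("l must be between 0 and 1")
    return 1.0 - (1.0 - l) ** n_users


if __name__ == "__main__":
    previous = 0.0
    for n in range(0, 11):
        found = share_of_problems_found(n)
        # How much the n-th user added on top of the previous n - 1 users.
        gain = found - previous
        print(f"{n:2d} users: {found:6.1%} found (+{gain:5.1%} from the last user)")
        previous = found
```

With the default L, five users uncover roughly 84% of the problems, and every extra user adds less than the one before, which is why running several small rounds of tests tends to pay off better than one large session.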

Carefully planned instructions are major success factors in user testing prototypes and wireframes.

Firstly, you should explain to your users that what they are working with is a prototype or wireframe, even if they’ve participated in similar sessions before. Secondly, you should make it crystal clear that it’s the wireframe or prototype you’re testing, not them.

You’ll then need to create a scenario complete with instructions for your users to follow during the test. An example of a scenario might be where you want your users to sign up and create an account with your recipe app, and then find a recipe and rate it.

There are two types of scenario instructions that you can provide in this case: open and closed. An open scenario gives a big picture instruction, such as:

“Register for a new account, then find a recipe that takes less than one hour and give your opinion on it”.

Conversely, an example of a closed scenario instruction is much more specific and looks more like the following:

“Go to ‘register’, click ‘sign-up’, enter details and then navigate to ‘recipes’.”

For the purpose of user testing prototypes and wireframes, we highly recommend going with an open scenario, as it’s the best way to determine the intuitiveness and usability of your product without leading your users by the hand.

Having an experienced moderator who facilitates the user testing session is helpful for securing feedback from the users and clarifying any instructions. A good moderator has an in-depth knowledge of the users’ habits and behaviors, gives clear instructions and is able to get the users through the whole process successfully from start to finish.

On the other hand, you can also user test prototypes without a moderator. Doing this will help you save time and money. It also allows you to test in any location at any time. However, you’ll need to ensure that your users are left with very clear instructions to complete the test.

Why is it important to have observers sit in while user testing prototypes or wireframes? Because your moderator may already be busy ensuring that the whole testing process goes without any hiccups.


Having someone else observe and take note of users’ behavior, body language and reactions is extremely important. It’s crucial data because, as the Interaction Design Foundation (IDF) states, satisfaction is among the most important usability metrics: “emotions affect the bottom line in business.”

First of all, you’ll need to establish what parts or screens of your prototype or wireframe you need to test. You should ideally be focusing on crucial aspects such as login, signup and payment forms, navigational design and conversion funnels.

You’ll then need to break the test up into a series of tasks and questions. Typically, when user testing prototypes and wireframes, you’ll put your users through around five tasks and ask them up to seven questions: one follow-up question for every task, plus two more at the end. A typical user testing session lasts between 60 and 90 minutes.
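One way to keep a session within that rule of thumb is to write the plan down as data and check it before the session. The Python sketch below is purely illustrative: `SessionPlan` and its fields are hypothetical names, and the warning thresholds simply encode the five tasks, seven questions and 60 to 90 minutes mentioned above.

```python
from dataclasses import dataclass


@dataclass
class SessionPlan:
    """Hypothetical outline of one user testing session."""

    tasks: list[str]
    closing_questions: list[str]
    duration_minutes: int = 60

    @property
    def questions(self) -> list[str]:
        # One follow-up question per task, then the closing questions at the end.
        follow_ups = [f"How did you find the task '{task}'?" for task in self.tasks]
        return follow_ups + self.closing_questions

    def warnings(self) -> list[str]:
        # Compare the plan with the rule of thumb: ~5 tasks, <= 7 questions, 60-90 min.
        issues = []
        if len(self.tasks) > 5:
            issues.append(f"{len(self.tasks)} tasks (around five is typical)")
        if len(self.questions) > 7:
            issues.append(f"{len(self.questions)} questions (up to seven is typical)")
        if not 60 <= self.duration_minutes <= 90:
            issues.append(f"{self.duration_minutes} minutes (60-90 is typical)")
        return issues


# Recipe-app scenario: the first three tasks follow the example scenario,
# the last two are made up for illustration.
plan = SessionPlan(
    tasks=[
        "Register for a new account",
        "Find a recipe that takes less than one hour",
        "Rate the recipe",
        "Save the recipe to your favorites",
        "Log out",
    ],
    closing_questions=["What did you like most?", "What would you change?"],
    duration_minutes=75,
)
print(len(plan.questions))  # 7
print(plan.warnings())  # []
```

Treat the thresholds as guidance rather than hard limits; the point is to notice early when a plan has grown too long for one sitting.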

When user testing prototypes and wireframes, you need to make sure that you allow for alternative navigation paths. Why? Because if you guide the user through the navigation path that you want them to go through, you can’t prove that the user can intuitively reach their goal on their own with your product.

Remember, once your product is on the market, your users won’t have your guiding hand to help them, and by then it will be too late to make changes that won’t cost an arm and a leg.

Allowing for alternative navigation paths, even if it just ends with a “go back to start” message, allows you to discover the click stream that’s naturally intuitive for your users. If the original navigation path didn’t go as planned, then you know it’s something you have to work on in further iterations.
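If you log which screens each user visits, a simple tally shows how many users followed your planned path and which alternative routes they found on their own. The Python sketch below assumes such a log exists; the screen names, `PLANNED_PATH` and the sessions are all hypothetical, based loosely on the recipe-app scenario.

```python
from collections import Counter

# Hypothetical planned path for the recipe-app scenario; screen names are illustrative.
PLANNED_PATH = ("home", "register", "recipes", "recipe_detail", "rating")


def summarize_paths(sessions: list[list[str]]) -> tuple[float, Counter]:
    """Return the share of users who followed the planned path and a tally of all paths."""
    if not sessions:
        raise ValueError("need at least one session")
    # Tuples are hashable, so each complete click stream can be counted as one key.
    tally = Counter(tuple(path) for path in sessions)
    share_planned = tally[PLANNED_PATH] / len(sessions)
    return share_planned, tally


# Hypothetical click streams from four test users.
sessions = [
    ["home", "register", "recipes", "recipe_detail", "rating"],
    ["home", "search", "recipe_detail", "register", "rating"],
    ["home", "search", "recipe_detail", "register", "rating"],
    ["home", "register", "recipes", "recipe_detail", "rating"],
]
share, tally = summarize_paths(sessions)
print(f"Followed planned path: {share:.0%}")  # Followed planned path: 50%
for path, count in tally.most_common():
    print(count, " > ".join(path))
```

A popular route that differs from the planned one is a strong hint about which click stream users find natural.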

Even if you’re a pro with years of experience user testing prototypes, we’d recommend never improvising during test sessions. To ensure the best outcome and that you’re getting the maximum benefit from the time and money invested, always have a script prepared.

Preparing a script beforehand will help everything to run smoothly and give structure to your user testing sessions. It will also help you remember exactly what to say and how to prompt your users during each step of the testing session.

In a typical script, you’ll include notes to yourself, the steps and stages of the test, an introduction to the test and closing remarks.

Running a pilot test is, like many things in user testing prototypes, optional but highly recommended. A pilot will help you unearth any holes in your test and fix anything that could go wrong before the real sessions. You can easily try the process out on your colleagues before your actual users.

Additionally, by running a pilot test session, you can be safe in the knowledge that your script runs smoothly and that everything makes sense.

When you want to convince your stakeholders, investors or clients that your product is usable, simply saying “all our users loved it and had a great time” isn’t going to make a huge impression. What they want to see is solid evidence.

Likewise, if the user testing session yielded less-than-favorable results, stakeholders will want a little more detail than “they just didn’t like the UI”. They want to know exactly how far they are from the goal (hopefully a usable product).

It makes sense then to have some basic but fundamental metrics to help give an idea of where you’re at in the development process and to help point towards possible design solutions. According to Usability Geek, some commonly used metrics are effectiveness, efficiency and satisfaction.

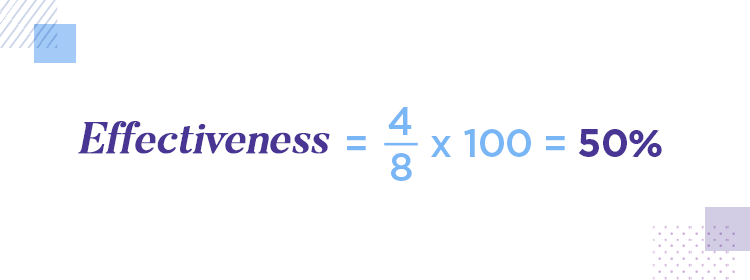

There’s quite an effective metric that happens to be called “effectiveness”, and it’s measured by what the folks at Usability Geek call the “completion rate”. The completion rate is a widely used usability metric, popular because it’s easy to work out. All you need to do is assign a value of “1” to each completed task and – you guessed it – “0” to each uncompleted one.

Then, simply divide the number of successfully completed tasks by the total number of tasks and multiply by 100 to get the rate. Let’s say you user test your prototype and give your testees 8 tasks, of which they manage to complete 4. You can work out the completion rate with this equation:

$$\text{Completion rate} = \frac{\text{tasks completed}}{\text{total tasks}} \times 100 = \frac{4}{8} \times 100 = 50\%$$

Performing that simple math gives you a completion rate of 50%. Of course, our goal is 100%, but this is heavily dependent on the context of the test, along with the stage you’re currently at in the product development cycle. It’s important to note that you can calculate the completion rate for effectiveness at any stage during the cycle.
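The same calculation is easy to automate once you record a 1 or 0 per task. A minimal Python sketch, assuming results are stored as a plain list of 1s and 0s (the function name is hypothetical):

```python
def completion_rate(results: list[int]) -> float:
    """Completion rate (%) from per-task results: 1 = completed, 0 = not completed."""
    if not results:
        raise ValueError("need at least one task result")
    if any(r not in (0, 1) for r in results):
        raise ValueError("each result must be 0 or 1")
    # Completed tasks divided by total tasks, expressed as a percentage.
    return sum(results) / len(results) * 100


# The example from the text: 8 tasks, 4 of them completed.
print(completion_rate([1, 0, 1, 1, 0, 0, 1, 0]))  # 50.0
```

The order of the results doesn’t matter; only the count of 1s relative to the total does.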

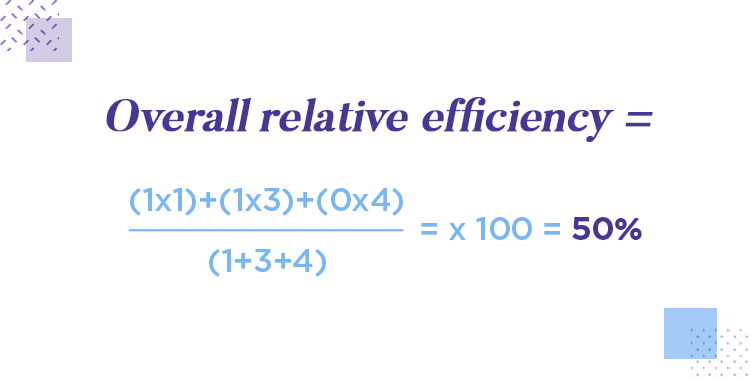

Efficiency is a large factor in usability and plays a big part in great UX. Therefore, another helpful metric to have up your sleeve is the “overall relative efficiency rate”. This rate measures two things: the number of users who completed a task and the time they took.

Let’s say you have three testees trying to complete a task with your wireframe or prototype. One user completes it in one second and another completes it in three seconds, while the third gives up after four seconds.

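In this case, we multiply each user’s result (1 if they completed the task, 0 if they abandoned it) by the time they spent on it and add the results together. We then divide that by the sum total of the time all users spent and multiply by 100. All that rigmarole looks like this, where $n_i$ is user $i$’s result and $t_i$ is the time they spent:

$$\text{Overall relative efficiency} = \frac{\sum_{i} n_i t_i}{\sum_{i} t_i} \times 100 = \frac{(1 \times 1) + (1 \times 3) + (0 \times 4)}{1 + 3 + 4} \times 100 = 50\%$$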

This slightly more advanced equation gives you an overall efficiency rate of 50%, meaning we’re only about halfway towards having an efficient product.
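Here is the same calculation as a short Python sketch. It assumes each attempt is recorded as a (completed, seconds spent) pair; multiplying by 1 or 0 is equivalent to summing only the time of the users who completed the task. The function name is hypothetical.

```python
def overall_relative_efficiency(attempts: list[tuple[bool, float]]) -> float:
    """Overall relative efficiency (%) for one task.

    attempts: one (completed, seconds_spent) pair per user. The time spent by
    users who completed the task is divided by the time spent by all users.
    """
    total_time = sum(seconds for _, seconds in attempts)
    if total_time <= 0:
        raise ValueError("total time must be positive")
    # Equivalent to sum(n_i * t_i) with n_i = 1 for completed, 0 for abandoned.
    successful_time = sum(seconds for completed, seconds in attempts if completed)
    return successful_time / total_time * 100


# The example from the text: two users finish (1 s and 3 s), one gives up after 4 s.
print(overall_relative_efficiency([(True, 1), (True, 3), (False, 4)]))  # 50.0
```

Because the denominator includes abandoned attempts, time users waste before giving up pulls the rate down, which is exactly what this metric is meant to capture.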

We can’t emphasize enough how much satisfaction matters in terms of UX. And good usability isn’t just about smooth design and logistics, it’s also about making the user happy with your product.

That’s why it’s always worth running a short satisfaction questionnaire after each task when user testing prototypes. A single simple question such as “how easy was this task?” should do, followed by a scale of 5 radio buttons ranging from “very easy” to “difficult”.

Using these mini questionnaires is a great way to gauge your users’ emotions after each task they complete with your wireframe or prototype.
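To compare tasks, you can turn the radio-button answers into scores and average them per task. The Python sketch below assumes the five options are scored 1 to 5, with 5 meaning “very easy”; that scoring, the task names and the responses are hypothetical.

```python
from statistics import mean


def satisfaction_by_task(responses: dict[str, list[int]]) -> dict[str, float]:
    """Average ease score per task on an assumed 1-5 scale (5 = very easy)."""
    averages = {}
    for task, scores in responses.items():
        if not scores:
            raise ValueError(f"no responses for task {task!r}")
        if any(not 1 <= s <= 5 for s in scores):
            raise ValueError(f"scores for {task!r} must be between 1 and 5")
        averages[task] = mean(scores)
    return averages


# Hypothetical answers from five users after each task.
responses = {
    "Sign up": [5, 4, 5, 4, 5],
    "Find a recipe": [3, 2, 4, 3, 3],
    "Rate the recipe": [4, 5, 4, 4, 5],
}
# Lowest-scoring (hardest-feeling) tasks first, so they stand out.
for task, avg in sorted(satisfaction_by_task(responses).items(), key=lambda kv: kv[1]):
    print(f"{task}: {avg:.1f}")
```

Sorting from lowest to highest puts the tasks users found hardest at the top, which is usually where the next iteration should start.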

When user testing your prototypes and wireframes, you’ll want to generate as much data as possible and analyze it. Users can be a goldmine of information about how intuitive your product’s design really is. Every issue they have – even where they look – can tell you a lot about what you need to work on in further iterations. You can even use your testing sessions to compare the usability of your product with that of a competitor! Ideally, you want a prototyping tool that integrates with your favorite testing tools for efficient testing.

Let’s start with problem discovery. It’s one of the most basic analysis techniques you can use when user testing your prototypes and wireframes, and it helps you flag all the potential issues your users face during the session. This is where the moderator comes in.

Having an experienced moderator monitor all the users’ actions, including speech and behavior, can help you pick up on the less obvious problems with your prototype or wireframe. These might include high stress levels, lack of satisfaction or boredom.

Eye tracking software definitely has its place in user testing prototypes and wireframes. Where the user is clicking and where they’re looking is not always quite the same.

What’s more, tracking the paths your users’ eyes follow helps you unearth more about the psychology behind the navigational flow of your product. It also helps you discover any potential barriers to conversion.

You might think your UI layout has great usability, but the truth is it might only be “okay”. The only way to know whether the usability of your product pales or triumphs in comparison to its potential competition is to run a parallel usability test against an existing product on the market.

This simply involves having two groups of users do exactly the same thing, with one group testing your wireframe or prototype and the other using the competitor’s product. After all, it’s always wise to know exactly what you’re up against.
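Once both groups have done the same task, you can summarize each group with the metrics described earlier and put them side by side. The Python sketch below is a minimal illustration with hypothetical results; `summarize_group` is a made-up name, and with groups this small any difference may be noise, so treat it as a pointer for further testing rather than proof.

```python
from statistics import mean


def summarize_group(attempts: list[tuple[bool, float]]) -> dict[str, float]:
    """Completion rate (%) and mean seconds per attempt (including abandoned ones)."""
    if not attempts:
        raise ValueError("need at least one attempt")
    completed = [done for done, _ in attempts]
    return {
        # True counts as 1 and False as 0, matching the completion-rate scoring.
        "completion_rate": sum(completed) / len(attempts) * 100,
        "mean_seconds": mean(seconds for _, seconds in attempts),
    }


# Hypothetical (completed, seconds) results for the same task in each group.
yours = [(True, 42.0), (True, 55.0), (False, 90.0), (True, 38.0)]
competitor = [(True, 35.0), (True, 40.0), (True, 47.0), (False, 80.0)]

for name, attempts in (("Your prototype", yours), ("Competitor", competitor)):
    s = summarize_group(attempts)
    print(f"{name}: {s['completion_rate']:.0f}% completed, {s['mean_seconds']:.1f} s on average")
```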

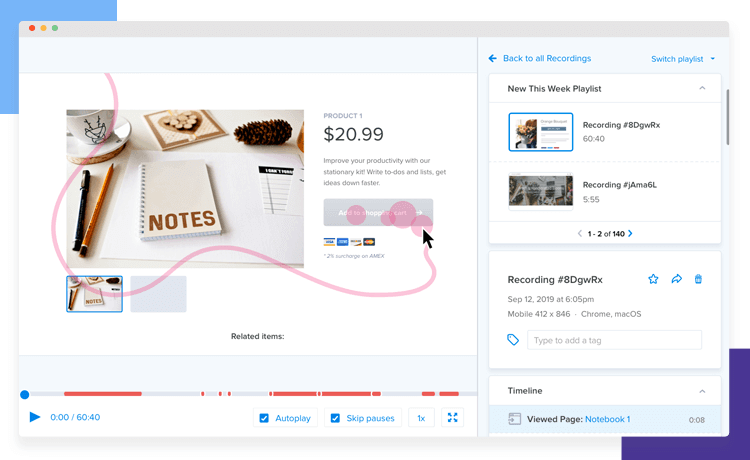

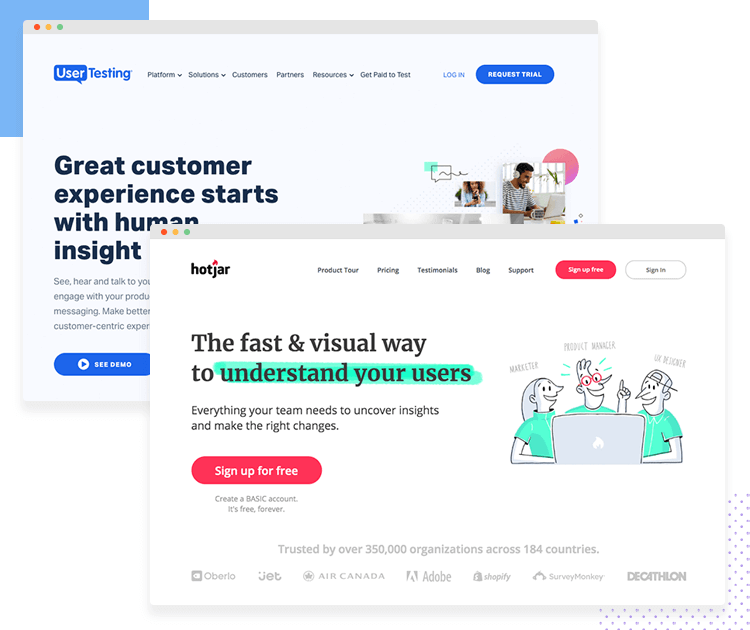

Lastly, before user testing prototypes and wireframes, take a moment to consider some of the great usability testing tools on the market. These can save you a lot of time and finicky work in the lead-up to, during and after the test.

Most usability testing tools offer remote user testing, either moderated or unmoderated. One of the benefits of using a usability testing tool is automatically generated reports, which save a lot of time because much of the analysis is already done for you.

Most usability testing tools also take care of recruiting users based on your user personas and chosen demographic as well as compensating users for their time.

Furthermore, if you’re a Justinmind user, you’ll find that our prototyping tool integrates with many of the most popular usability testing tools on the market! To learn how to use them with our tool, check out our guide on Justinmind’s integration with user testing tools.

Regularly user testing prototypes is the only way to guarantee that your product is really solving a problem and will make a difference in your users’ lives. It’s also the best way to avoid expensive rework for your development team.

When it comes to how often you should user test your prototypes and wireframes, the only definitive answer is “as much as possible”, or at least as much as your budget and time constraints allow.

There are many different techniques you can use when user testing prototypes and wireframes, and no two situations or products will have exactly the same testing requirements. However, the fundamental principles in this guide should give you a good indication of which direction to take.